The Real-Time Advantage: Architecting Enterprise AI Voice Platforms with Instant CRM Data Synchronization

In the rapidly evolving landscape of conversational artificial intelligence, the distinction between a simple AI voice agent and a comprehensive enterprise AI voice platform with real-time CRM data integration has become the defining factor for commercial success. For technical evaluators, software architects, and enterprise decision-makers, understanding the architectural imperatives of this technology is no longer optional. Businesses are actively migrating away from legacy interactive voice response (IVR) systems and disjointed chatbot interfaces, seeking robust solutions that act as a seamless extension of their existing customer relationship management (CRM) and applicant tracking system (ATS) infrastructures.

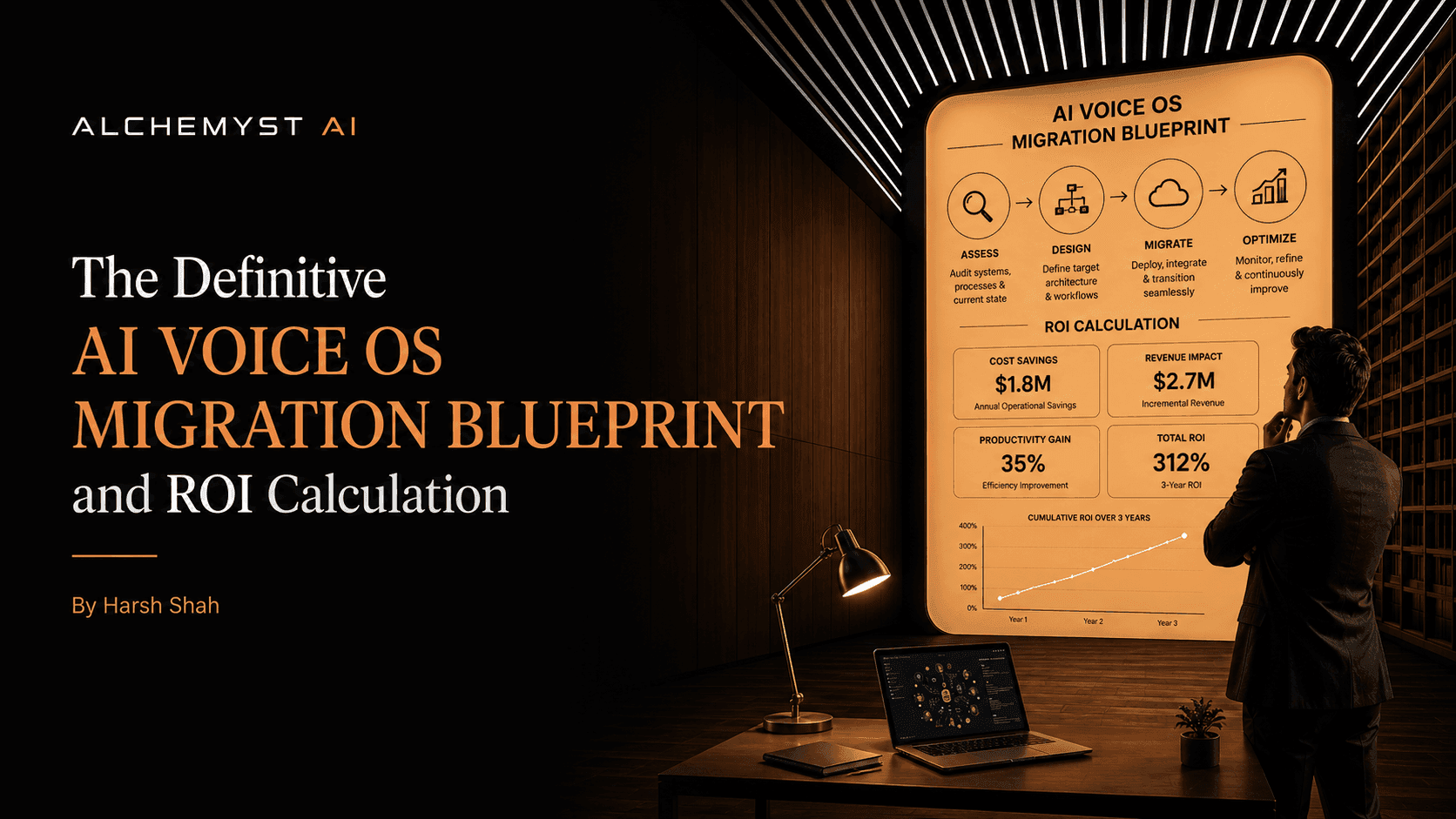

This definitive blueprint explores the technical, strategic, and financial imperatives of implementing an enterprise AI voice platform. We will dissect the architectural complexities of real-time data flow, contrast context engineering with traditional prompt engineering, outline the data governance and security protocols required for immediate data access, and provide a structured framework for calculating Return on Investment (ROI) based on qualified outcomes rather than vanity metrics.

AI Voice Agent vs. Enterprise AI Voice Platform: The Critical Distinction

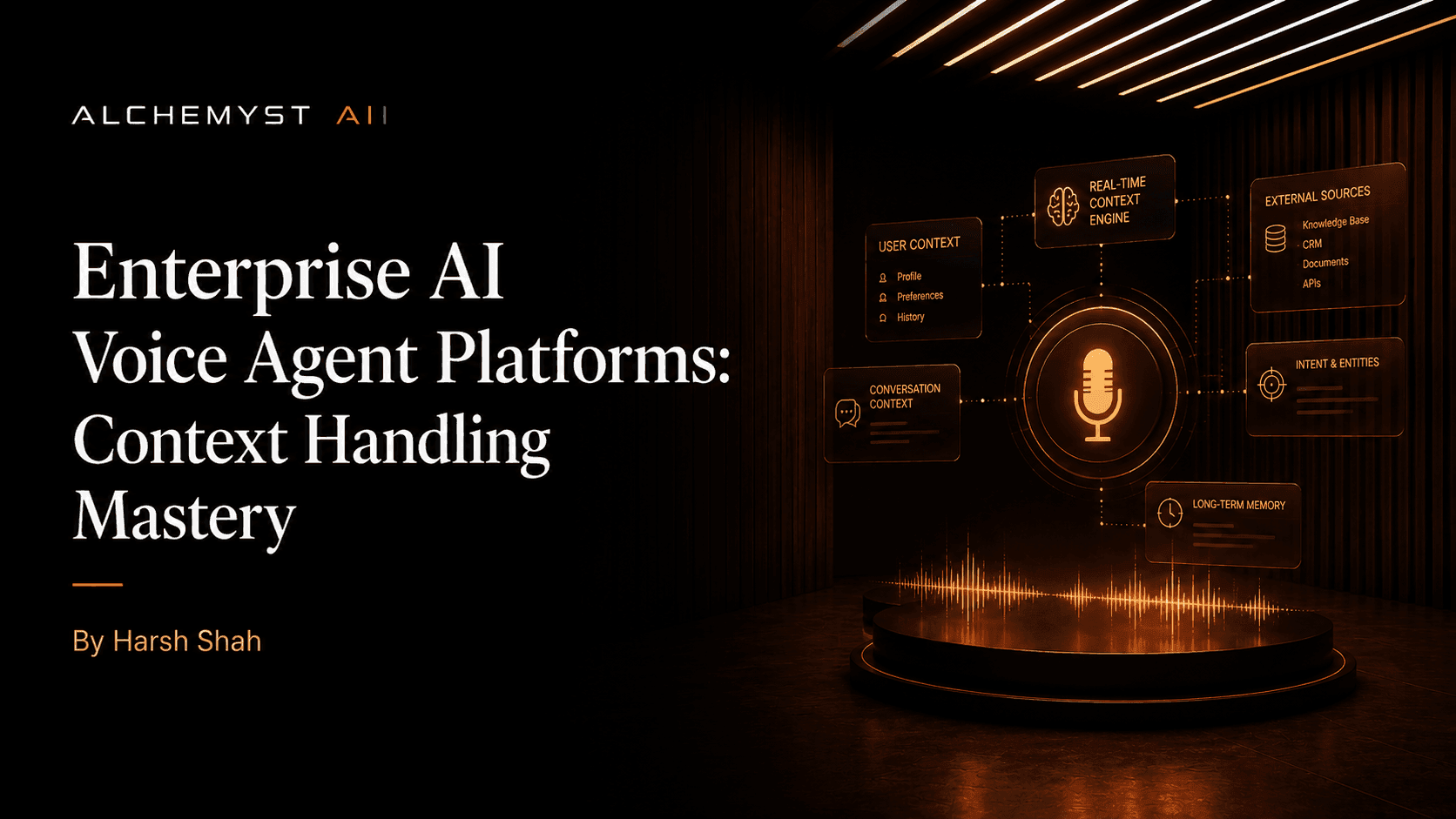

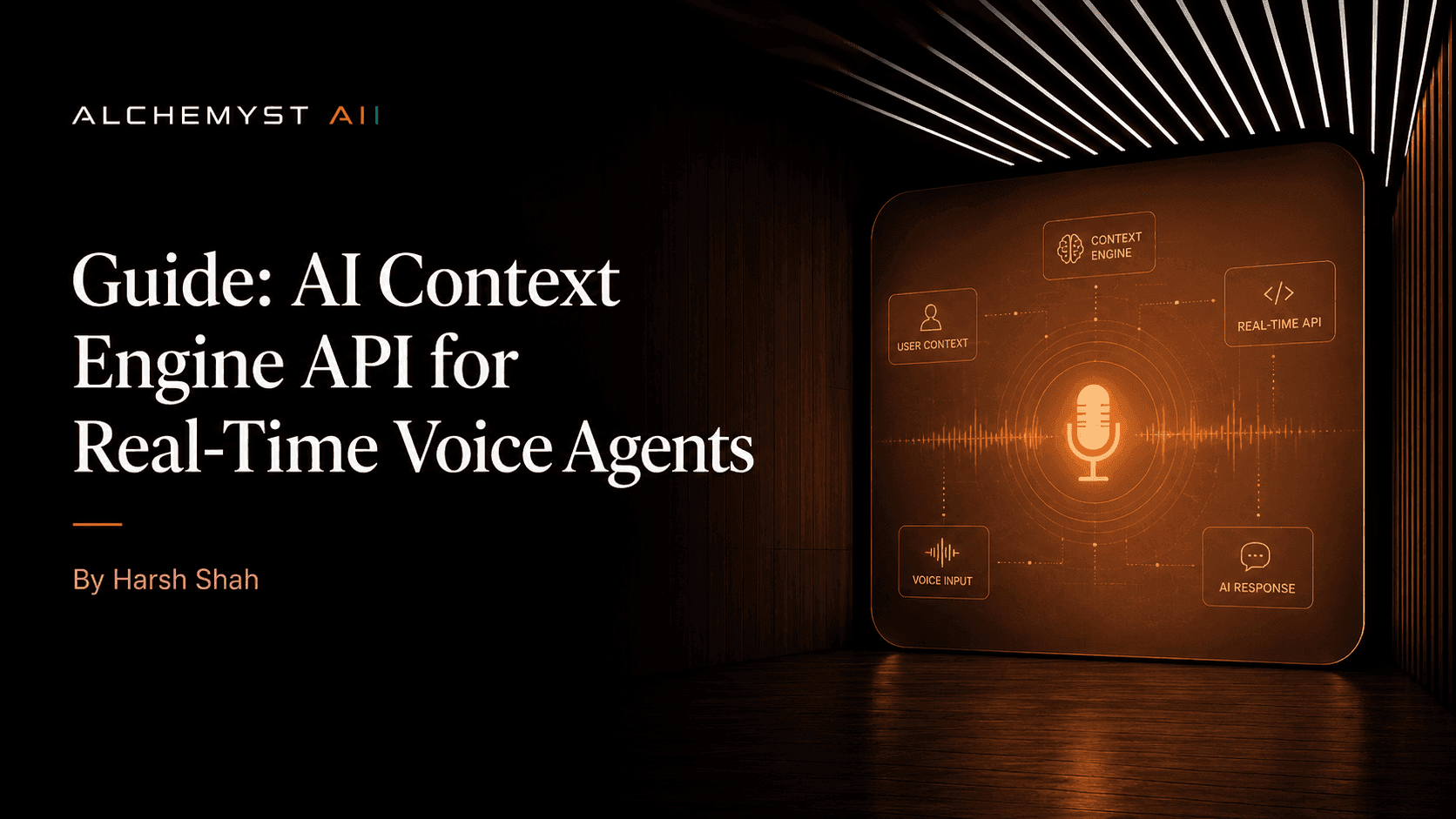

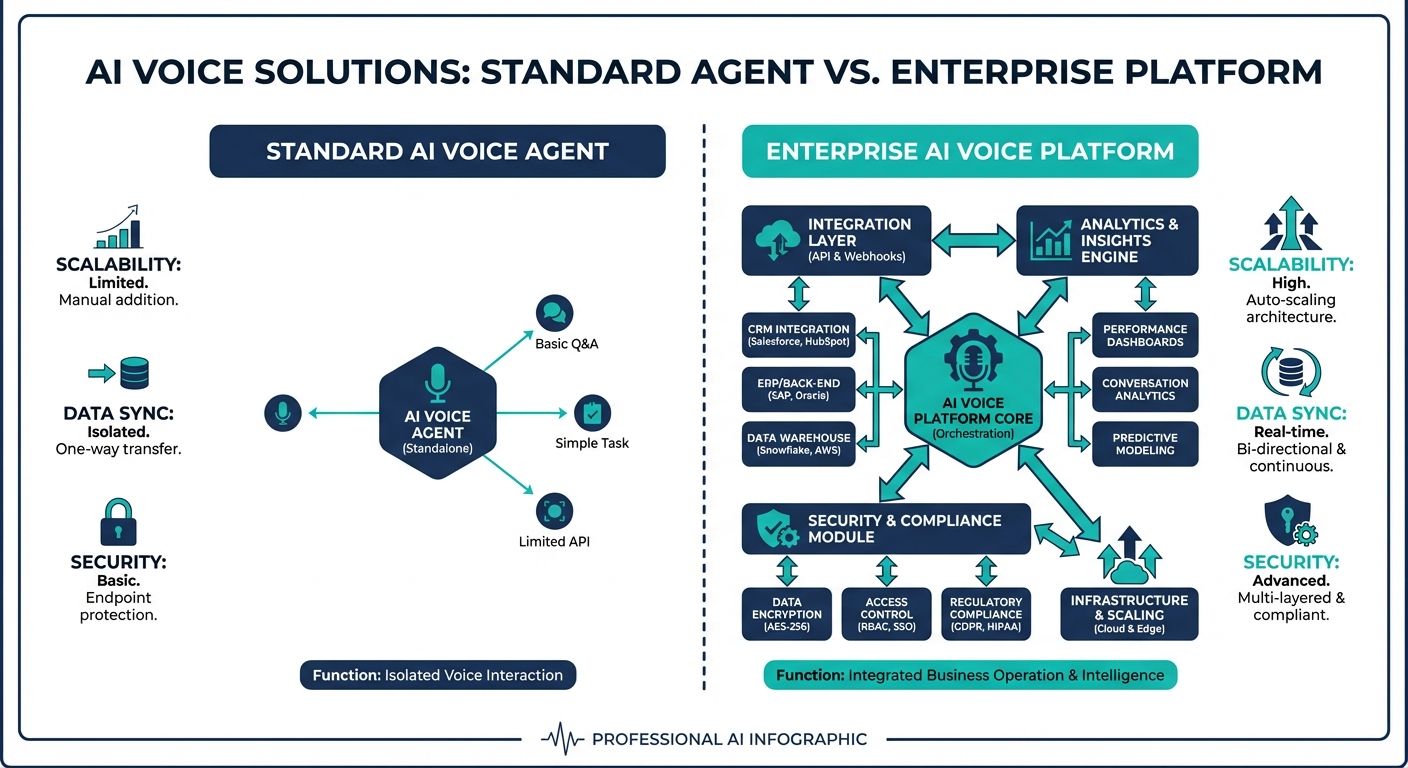

A common pitfall in commercial evaluations of conversational AI is conflating an isolated voice agent with a holistic enterprise platform. An AI voice agent is fundamentally a conversational interface: a combination of Speech-to-Text (STT), a Large Language Model (LLM), and Text-to-Speech (TTS) components. While these agents can handle basic interactions, they operate in a vacuum, with no underlying architecture to supply them with dynamic, account-specific intelligence.

An enterprise AI voice platform, on the other hand, is an orchestration engine. It encompasses the voice agent but surrounds it with critical enterprise guardrails: real-time database connectors, state management, observability suites, security compliance layers, and advanced information retrieval systems. The platform is what enables the agent to query a CRM, retrieve a customer's recent support ticket, and alter its dialogue flow within the latency budget of a single conversational turn. Without this real-time CRM data integration, voice agents sound robotic, lack situational awareness, and ultimately frustrate customers by requiring them to repeat information.

The Strategic Imperative of Real-Time CRM Data Integration

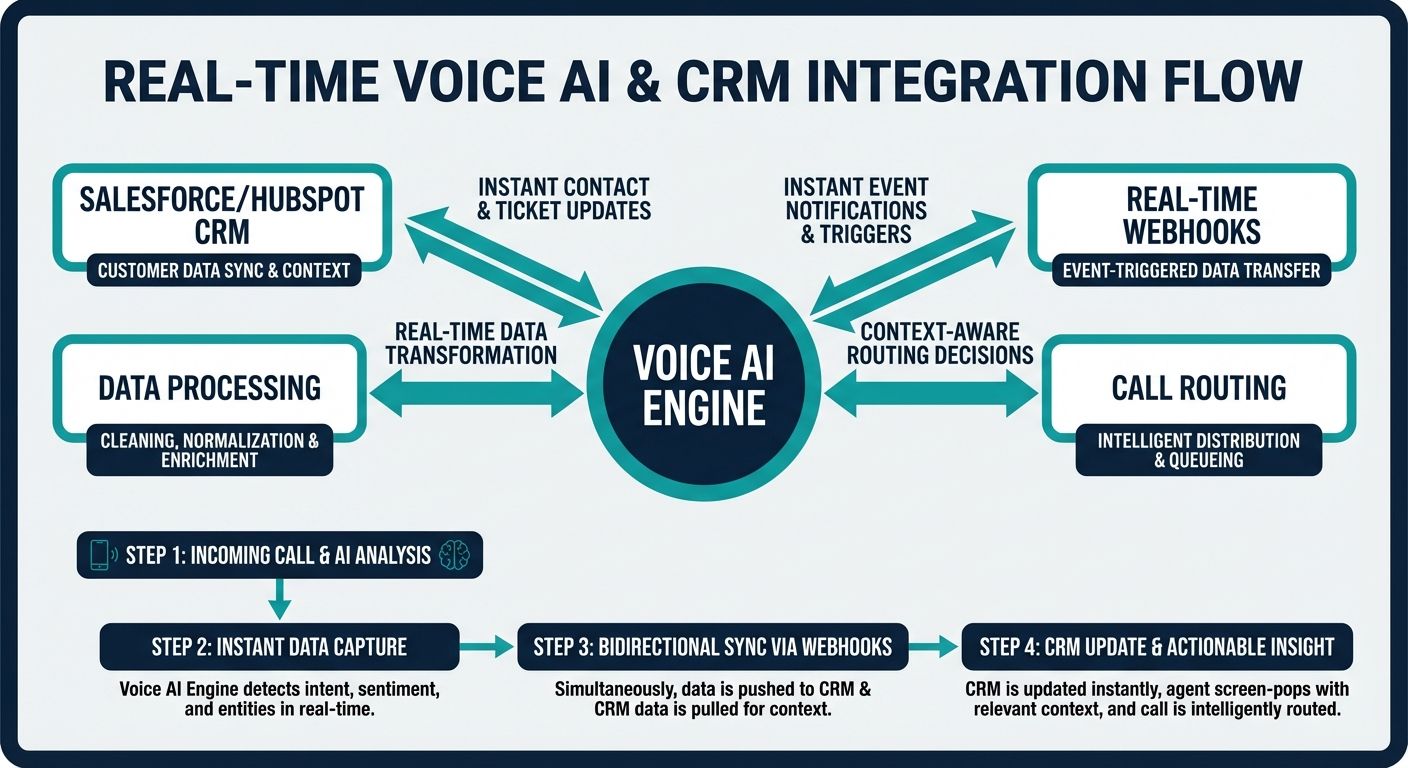

Batch processing and nightly data syncs are entirely inadequate for conversational AI. When a customer speaks to an AI representative, the AI must possess instantaneous access to the customer's entire historical and current lifecycle context. This is where real-time CRM data integration transforms the customer experience.

Consider a scenario where a user upgrades their subscription via a web portal while simultaneously calling support to confirm the change. If the AI relies on a delayed data pipeline, it will confidently, and incorrectly, state that the customer is still on the old plan. Real-time synchronization prevents these stale-data errors by treating live API queries as the source of truth at the moment of the call. This real-time context awareness bridges the gap between robotic automation and human-like consultative support.

Architectural Blueprint: Engineering Real-Time Data Flow

Keeping retrieval latency low enough that callers do not notice it requires a deliberate technical architecture in an enterprise environment. Top-tier platforms employ event-driven architectures and bidirectional streaming so that dialogue generation is not blocked by database queries.

API Strategies and Managing Data Latency

To architect an enterprise AI voice platform with real-time CRM data integration, developers often need to move beyond simple request-response REST polling. GraphQL and WebSockets are frequently employed: GraphQL to fetch exactly the fields needed in a single round trip, and WebSockets to maintain persistent connections between the voice OS and the CRM (such as Salesforce, HubSpot, or custom enterprise solutions). When a user speaks, the STT engine streams the transcript to the orchestrator. Simultaneously, a parallel task uses entity extraction to identify account identifiers and immediately issues a GraphQL query to the CRM. Because the two run concurrently, the CRM payload has usually been returned and injected into the context window by the time the LLM begins generating its response.
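The concurrency described above can be sketched with Python's `asyncio`. This is a minimal illustration, not a production orchestrator: `fetch_crm_record` and `finish_transcript` are hypothetical stand-ins that simulate latency with `asyncio.sleep`, and the `ACC-` identifier format is an assumption made for the example.

```python
import asyncio
import re
from typing import Optional


async def fetch_crm_record(account_id: str) -> dict:
    """Hypothetical CRM lookup; a real system would issue a GraphQL query here."""
    await asyncio.sleep(0.05)  # simulated network latency
    return {"account_id": account_id, "plan": "premium"}


async def finish_transcript(partial: str) -> str:
    """Hypothetical stand-in for the STT stream delivering the rest of the utterance."""
    await asyncio.sleep(0.05)
    return partial + " and I want to confirm my plan"


def extract_account_id(text: str) -> Optional[str]:
    """Naive entity extraction; assumes IDs look like 'ACC-' followed by digits."""
    match = re.search(r"\bACC-\d+\b", text)
    return match.group(0) if match else None


async def build_context(partial_transcript: str) -> dict:
    """Start the CRM lookup as soon as an ID is heard, while STT keeps streaming."""
    account_id = extract_account_id(partial_transcript)
    transcript_task = asyncio.create_task(finish_transcript(partial_transcript))
    crm_task = None
    if account_id is not None:
        crm_task = asyncio.create_task(fetch_crm_record(account_id))

    # Both tasks are already running; awaiting them here does not serialize them.
    transcript = await transcript_task
    crm = await crm_task if crm_task is not None else None
    return {"transcript": transcript, "crm": crm}
```

Calling `asyncio.run(build_context("my account is ACC-1042"))` runs both simulated operations side by side, so the total wait is roughly the slower of the two rather than their sum.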

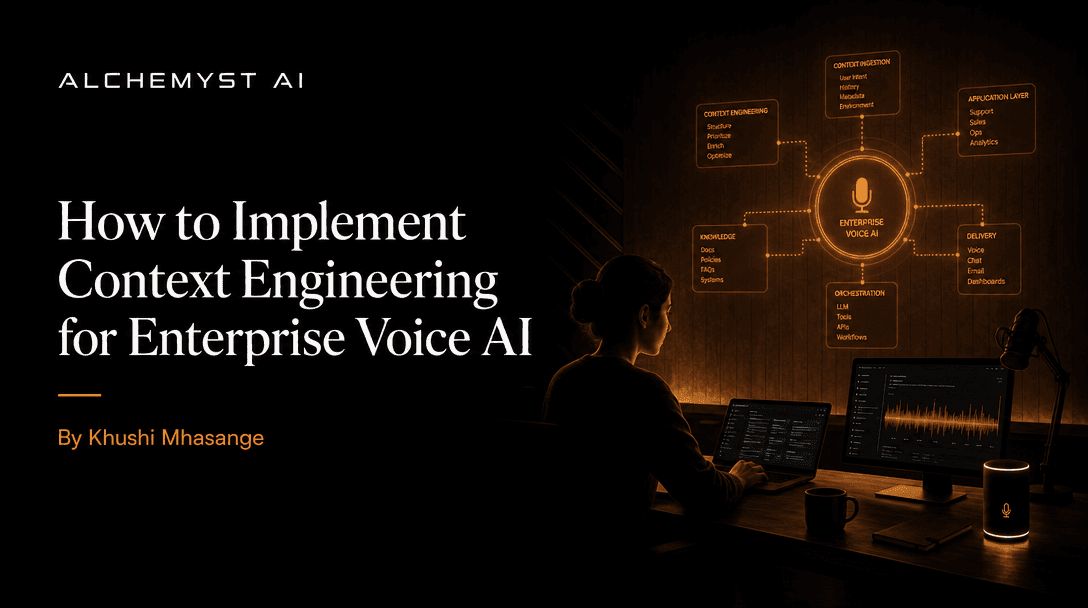

Alchemyst's Kathan Engine and Context Arithmetic

At the leading edge of this architectural paradigm is Alchemyst's Kathan engine, which introduces a computational process known as Context Arithmetic. Traditional platforms rely heavily on prompt engineering, that is, tweaking the instructions given to the LLM. However, prompt engineering cannot solve the problem of missing information. Context engineering, powered by the Kathan engine, focuses on systematically determining the most relevant slice of enterprise data to feed the agent at each point in the call.

This is achieved through a rigorous five-stage set-algebraic pipeline:

- 1. Semantic Similarity Search: The user's spoken utterance is converted into vector embeddings, querying the enterprise knowledge base and CRM cache for conceptually related data.

- 2. Metadata Filtering: The retrieved dataset is filtered using hard constraints (e.g., matching the caller's unique CRM ID, regional compliance tags, and active subscription status).

- 3. Deduplication: Overlapping data chunks from various CRM fields or knowledge base articles are mathematically merged to prevent token waste and reduce LLM confusion.

- 4. Ranking: The remaining contextual data points are scored based on recency, relevance, and semantic weight.

- 5. Dynamic Injection: The highest-scoring context is injected into the LLM's context window, so that the response the LLM generates, and the text-to-speech engine then voices, is accurate and highly contextualized.

This approach effectively replaces rudimentary Retrieval-Augmented Generation (RAG) with a deterministic, mathematically sound pipeline tailored specifically for the rigorous latency demands of voice communication.
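To make the five stages concrete, here is a toy sketch in Python. It is not the Kathan engine's implementation, which the source does not show. The `Chunk` fields, the two-dimensional embeddings, the similarity threshold, and the age-discounted ranking formula are all assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Chunk:
    text: str
    embedding: Tuple[float, ...]  # toy embedding; real ones have hundreds of dimensions
    account_id: str
    age_days: int


def cosine(a: Tuple[float, ...], b: Tuple[float, ...]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def select_context(query_vec: Tuple[float, ...], chunks: List[Chunk],
                   account_id: str, min_sim: float = 0.5, top_k: int = 2) -> str:
    # 1. Semantic similarity search: keep chunks close to the query vector.
    scored = [(cosine(query_vec, c.embedding), c) for c in chunks]
    similar = [(s, c) for s, c in scored if s >= min_sim]

    # 2. Metadata filtering: hard constraint on the caller's account.
    filtered = [(s, c) for s, c in similar if c.account_id == account_id]

    # 3. Deduplication: collapse chunks whose normalized text is identical,
    #    keeping the copy with the highest similarity.
    best = {}
    for s, c in filtered:
        key = " ".join(c.text.lower().split())
        if key not in best or s > best[key][0]:
            best[key] = (s, c)

    # 4. Ranking: similarity discounted by age (an assumed scoring rule).
    ranked = sorted(best.values(),
                    key=lambda sc: sc[0] / (1 + sc[1].age_days / 30),
                    reverse=True)

    # 5. Dynamic injection: the text that would be placed in the context window.
    return "\n".join(c.text for _, c in ranked[:top_k])
```

Each stage only narrows or reorders the candidate set, which is one way to read the "set-algebraic" framing: the output is always a subset of the chunks retrieved in stage 1.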

Technical Integration and Migration Strategy

Migrating from legacy IVR systems to a modern enterprise AI voice platform requires a clear blueprint. Haphazard deployments result in disconnected data silos and degraded customer trust. Technical evaluators must prioritize a structured migration strategy.

Step-by-Step Data Migration Blueprint

Phase 1: API Auditing and Endpoint Mapping. Before any voice agent is deployed, enterprise architects must audit their CRM APIs. This involves mapping out the exact endpoints required for reading customer states and writing call outcomes. Rate limits must be negotiated, and dedicated service accounts with granular permissions must be established.

Phase 2: Implementing Webhook Triggers. Real-time integration is not just about the AI reading data; it is about the AI updating the CRM mid-conversation. Webhook triggers must be configured so that if the AI agent successfully qualifies a lead or resolves a ticket, a payload is immediately pushed to the CRM, triggering downstream enterprise workflows without human intervention.
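As a rough sketch of the Phase 2 write-back, the snippet below builds a signed webhook request body and verifies it on the receiving side. The event name, payload fields, and the `X-Signature-SHA256` header are hypothetical; each CRM defines its own webhook schema and signing scheme, so treat this only as an illustration of the pattern.

```python
import hashlib
import hmac
import json
from typing import Dict, Tuple


def build_webhook_request(event: str, data: dict, secret: bytes) -> Tuple[bytes, Dict[str, str]]:
    """Serialize the call outcome and sign it so the receiver can check integrity."""
    body = json.dumps({"event": event, "data": data},
                      sort_keys=True, separators=(",", ":")).encode("utf-8")
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    headers = {
        "Content-Type": "application/json",
        "X-Signature-SHA256": signature,  # hypothetical header name
    }
    return body, headers


def verify_webhook(body: bytes, headers: Dict[str, str], secret: bytes) -> bool:
    """Receiver side: recompute the HMAC and compare in constant time."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, headers.get("X-Signature-SHA256", ""))
```

For example, `build_webhook_request("lead.qualified", {"lead_id": "L-1"}, secret)` yields a body and headers that could be sent with any HTTP client. Signing matters here because these payloads trigger downstream workflows without human review.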

Phase 3: Conversational Memory and Chat History. Enterprise integration requires that the AI maintains session memory. In practice, this means storing short-term dialogue turns in a low-latency in-memory data store (such as Redis), while long-term conversational summaries are written back asynchronously to the CRM's interaction logs for future reference by human agents.
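The short-term half of Phase 3 might look like the sketch below. To stay self-contained it uses an in-process dictionary rather than a Redis client; in a Redis deployment the same pattern is commonly built from the `RPUSH`, `LTRIM`, `EXPIRE`, and `LRANGE` commands. The class name, the turn limit, and the TTL are illustrative assumptions.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple


class SessionMemory:
    """Bounded, expiring per-call dialogue history (in-memory stand-in for Redis)."""

    def __init__(self, max_turns: int = 6, ttl_seconds: float = 900.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max_turns = max_turns
        self._ttl = ttl_seconds
        self._clock = clock
        self._sessions: Dict[str, Tuple[float, Deque[dict]]] = {}

    def append(self, call_id: str, role: str, text: str) -> None:
        now = self._clock()
        entry = self._sessions.get(call_id)
        if entry is None or entry[0] <= now:
            turns: Deque[dict] = deque(maxlen=self._max_turns)  # start a fresh session
        else:
            turns = entry[1]
        turns.append({"role": role, "text": text})  # oldest turn drops off when full
        self._sessions[call_id] = (now + self._ttl, turns)  # refresh expiry on write

    def recent(self, call_id: str) -> List[dict]:
        entry = self._sessions.get(call_id)
        if entry is None or entry[0] <= self._clock():
            self._sessions.pop(call_id, None)  # expired or unknown session
            return []
        return list(entry[1])
```

Bounding the number of turns keeps the prompt small, and the expiry ensures abandoned calls do not leave customer dialogue sitting in memory indefinitely.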

Data Governance, Security, and Compliance in Real-Time

Instantaneous CRM data synchronization introduces significant security considerations. When an AI voice platform queries a CRM in real time, it accesses highly sensitive Personally Identifiable Information (PII) and, in some deployments, payment card data that falls under the Payment Card Industry Data Security Standard (PCI DSS). A robust enterprise platform must implement a Zero-Trust architecture.

Security protocols must dictate that data is encrypted both in transit (via TLS 1.3) and at rest. More critically for voice, the platform must perform real-time PII redaction. Before the caller's transcribed speech is sent to an external LLM provider, intermediate middleware must scrub credit card numbers, Social Security numbers, and private account details. Furthermore, the platform must adhere strictly to the Principle of Least Privilege: the API keys it uses should grant access only to the CRM fields necessary to resolve the caller's immediate query, which limits the potential for data over-exposure.
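A minimal sketch of such redaction middleware is shown below, assuming the transcript already contains digits as numerals. The patterns are illustrative only: they cover US-style Social Security numbers and 13 to 19 digit card numbers that pass a Luhn checksum, and a production system would need broader coverage (spelled-out digits, other identifier formats).

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")
# US Social Security number in the common 3-2-4 grouping.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to avoid redacting arbitrary long numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact(text: str) -> str:
    """Replace likely card numbers and SSNs before text leaves the platform."""
    def _card(match: "re.Match[str]") -> str:
        digits = re.sub(r"\D", "", match.group(0))
        return "[CARD]" if luhn_valid(digits) else match.group(0)

    text = CARD_RE.sub(_card, text)
    return SSN_RE.sub("[SSN]", text)
```

The Luhn check is a deliberate design choice: it lets order numbers and similar long digit strings pass through untouched while still catching well-formed card numbers.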

Transformative Real-Time Use Cases

The value of an enterprise AI voice platform with real-time CRM data integration is most visible in practice. The following industry-focused use cases show where instant data synchronization changes operations:

- Dynamic Call Routing and Ticket Status: A customer calls support. Instantly, the platform cross-references the caller's phone number with the CRM. It identifies an open, high-severity support ticket. Instead of asking 'How can I help you?', the AI proactively states, 'Hello, I see you have an open ticket regarding your recent router outage. I have an update from the engineering team. Would you like to hear it?'

- Intelligent Cross-Selling and Sales Qualification: During an outbound sales call, a prospect mentions a specific pain point. The AI instantly queries the CRM for previous marketing materials the prospect has engaged with, adjusting its pitch in real-time to highlight features the prospect has already shown interest in, seamlessly writing the newly qualified data points back to the ATS or CRM.

- Automated Account Updates and Authentication: A user needs to change their billing address. The AI utilizes voice biometrics and multi-factor authentication via SMS, verifies the response, and instantly executes an API POST request to the CRM to update the address, confirming the change back to the user within seconds.
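The first use case above, a proactive greeting driven by an open ticket, can be sketched as follows. The ticket dictionary shape, the `severity` scale, and the assumption that bare 10-digit numbers belong to country code 1 are all hypothetical; a real platform would query the CRM rather than an in-memory mapping.

```python
import re
from typing import Dict, List


def normalize_phone(raw: str, default_country_code: str = "1") -> str:
    """Reduce a caller ID to +<digits>; assumes 10-digit numbers lack a country code."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 10:
        digits = default_country_code + digits
    return "+" + digits


def opening_line(caller_number: str, tickets_by_phone: Dict[str, List[dict]]) -> str:
    """Pick a greeting based on the caller's highest-severity open ticket, if any."""
    tickets = tickets_by_phone.get(normalize_phone(caller_number), [])
    open_tickets = [t for t in tickets if t["status"] == "open"]
    if not open_tickets:
        return "How can I help you?"
    top = max(open_tickets, key=lambda t: t["severity"])
    return (f"Hello, I see you have an open ticket regarding {top['subject']}. "
            "Would you like to hear an update?")
```

Unknown callers and callers with no open tickets fall back to the generic greeting, so the lookup can only improve the opening, never block it.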

Redefining ROI: Cost Per Qualified Outcome

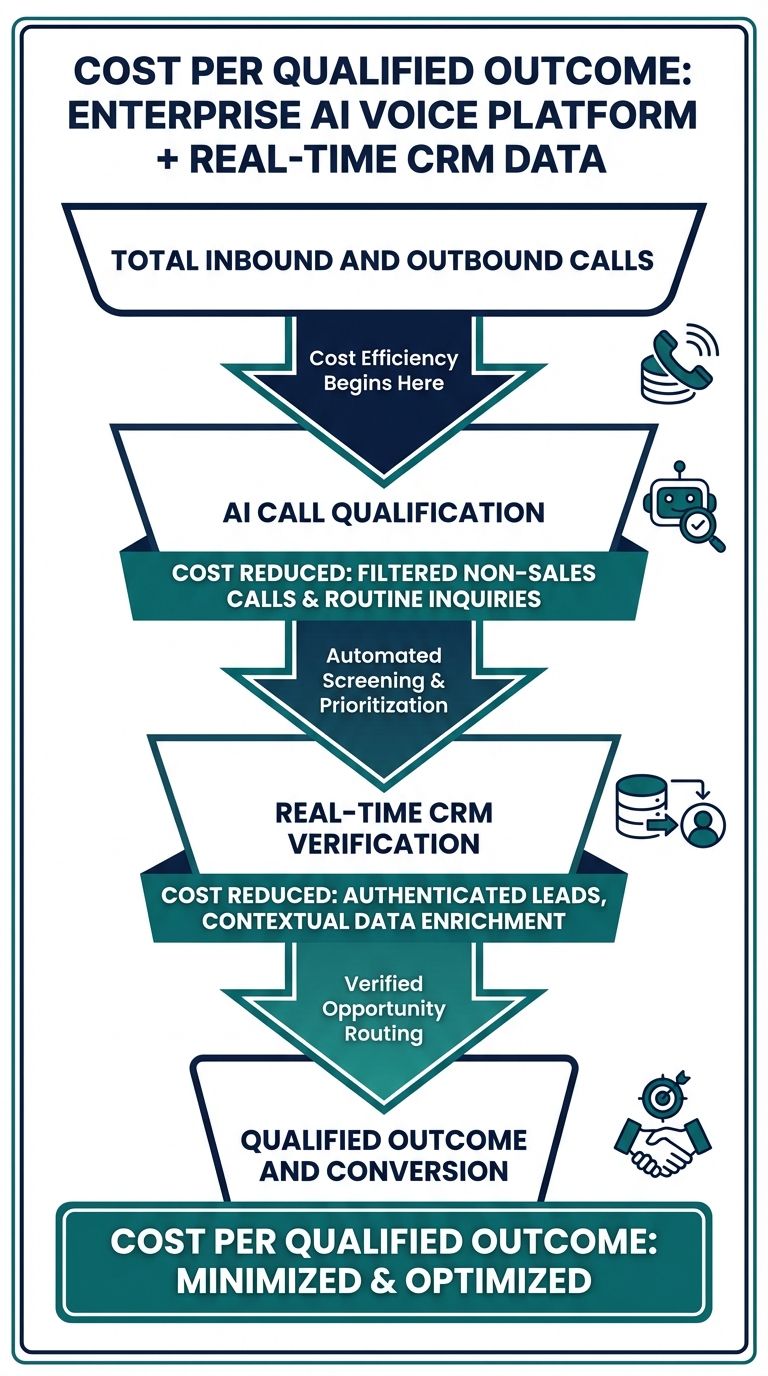

A comprehensive migration to an enterprise AI voice platform requires a structured ROI framework that extends far beyond generic cost savings. Historically, the voice AI industry has relied on pricing models based on per-minute, per-call, or per-seat structures. These metrics are misleading because they are decoupled from results, and per-minute pricing in particular rewards inefficiency. If a basic, context-free AI agent takes ten minutes of frustrating dialogue to resolve a simple issue, the vendor makes more money while the enterprise suffers.

The true metric for enterprise evaluation is Cost Per Qualified Outcome (CPQO). A qualified outcome is a definitive, valuable action: a closed ticket, a scheduled appointment, a verified address update, or a highly qualified sales lead pushed directly into the CRM.
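One plausible way to compute CPQO is total channel cost divided by the number of qualified outcomes. The sketch below assumes per-minute platform pricing plus a flat cost per human escalation; both cost components and every figure in the usage note are hypothetical and serve only to show the arithmetic.

```python
def cost_per_qualified_outcome(total_minutes: float,
                               price_per_minute: float,
                               escalations: int,
                               cost_per_escalation: float,
                               qualified_outcomes: int) -> float:
    """CPQO = (platform usage cost + human escalation cost) / qualified outcomes."""
    if qualified_outcomes <= 0:
        raise ValueError("CPQO is undefined without at least one qualified outcome")
    total_cost = total_minutes * price_per_minute + escalations * cost_per_escalation
    return total_cost / qualified_outcomes
```

With made-up numbers, 1,000 minutes at 0.10 per minute plus 20 escalations at 5.00 each, spread over 50 qualified outcomes, gives (100 + 100) / 50 = 4.00 per outcome. Holding outcomes constant, fewer escalations or shorter calls lower the figure, which is the behavior the metric is meant to reward.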

Context-free agents inflate expenses. Because they lack real-time CRM data integration, they ask redundant questions, misinterpret context, and frequently require costly escalations to human agents, which negates the initial cost savings. In contrast, an enterprise AI voice platform utilizing context arithmetic directly reduces the time-to-resolution. With instant access to the CRM as the source of truth, the AI can handle the complex, real-world conversational variations that cause standard agents to fail.

When calculating ROI, businesses must factor in the reduction of human-agent handle time, the elimination of manual post-call data entry (since the AI writes back to the CRM instantly), and the increased conversion rates driven by hyper-personalized, context-aware interactions. The initial technical investment in architecting real-time synchronization pays dividends by transforming the voice channel from a cost center into a high-efficiency operational asset.

Conclusion

The transition to an enterprise AI voice platform with real-time CRM data integration represents a paradigm shift in automated customer communication. By moving away from basic prompt engineering toward rigorous context arithmetic and establishing low-latency, bidirectional data flows, enterprises can deploy voice AI that truly augments human capabilities. The migration blueprint requires meticulous attention to API strategies, strict adherence to real-time data governance, and a fundamental shift in how ROI is calculated. For organizations willing to embrace this architectural complexity, the result is a significant competitive advantage: a conversational AI system that does not just speak and listen, but works from the complete context of every customer.